Price changes happen fast. A competitor drops their price by 15% at 2 AM, and you won't know until a customer tells you. Manual monitoring doesn't scale, and by the time you catch up, the damage is done. Building a price monitoring scraper solves this by pulling pricing data automatically across multiple sources, so you have an up-to-date picture without the manual work. Proxies keep the whole thing running by rotating your IP, so target websites are far less likely to flag and block your requests.

In this article, we'll explore how to build a price monitoring scraper with proxies from scratch.

Setting Up Your Price Monitoring Scraper

Python is the go-to choice here. You'll need two libraries: Requests for sending HTTP requests and BeautifulSoup for parsing the HTML and pulling out the price data. Install both with pip (the packages are named requests and beautifulsoup4), and you're ready to go. The basic flow looks like this:

- Send a GET request to the product page you want to monitor.

- Pass the HTML response to BeautifulSoup and locate the element that contains the price.

- Prices usually sit inside a span or div with a specific class you can target directly. Inspect the page in your browser's developer tools to find it.

- Pull the text, strip the currency symbol, and you have your price.
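The steps above can be sketched roughly as follows. This is a minimal example, not a drop-in scraper: the `span.price` selector is a hypothetical placeholder, the function names are made up for illustration, and the cleanup assumes prices formatted like `$1,299.99`. Check the markup of the page you're actually targeting.

```python
import re
from decimal import Decimal

import requests
from bs4 import BeautifulSoup

# Hypothetical selector: inspect the real product page to find the right one.
PRICE_SELECTOR = "span.price"


def extract_price(html, selector=PRICE_SELECTOR):
    """Return the first matching price in the HTML as a Decimal, or None."""
    soup = BeautifulSoup(html, "html.parser")
    element = soup.select_one(selector)
    if element is None:
        return None
    text = element.get_text(strip=True)
    # Keep digits and the decimal point; drop currency symbols and
    # thousands separators. Assumes "1,299.99"-style formatting.
    cleaned = re.sub(r"[^\d.]", "", text)
    return Decimal(cleaned) if cleaned else None


def fetch_price(url, selector=PRICE_SELECTOR):
    """Download a product page and return its price, or None if not found."""
    response = requests.get(url, timeout=10)
    response.raise_for_status()
    return extract_price(response.text, selector)
```

Keeping the parsing in its own function means you can check it against saved HTML without hitting the live site every time. Calling `fetch_price()` with a product URL then returns the current price, or `None` if the selector matched nothing.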

Your scraper works at this point, but many websites track request frequency and will block you after too many hits from the same IP, which is where proxies come in.


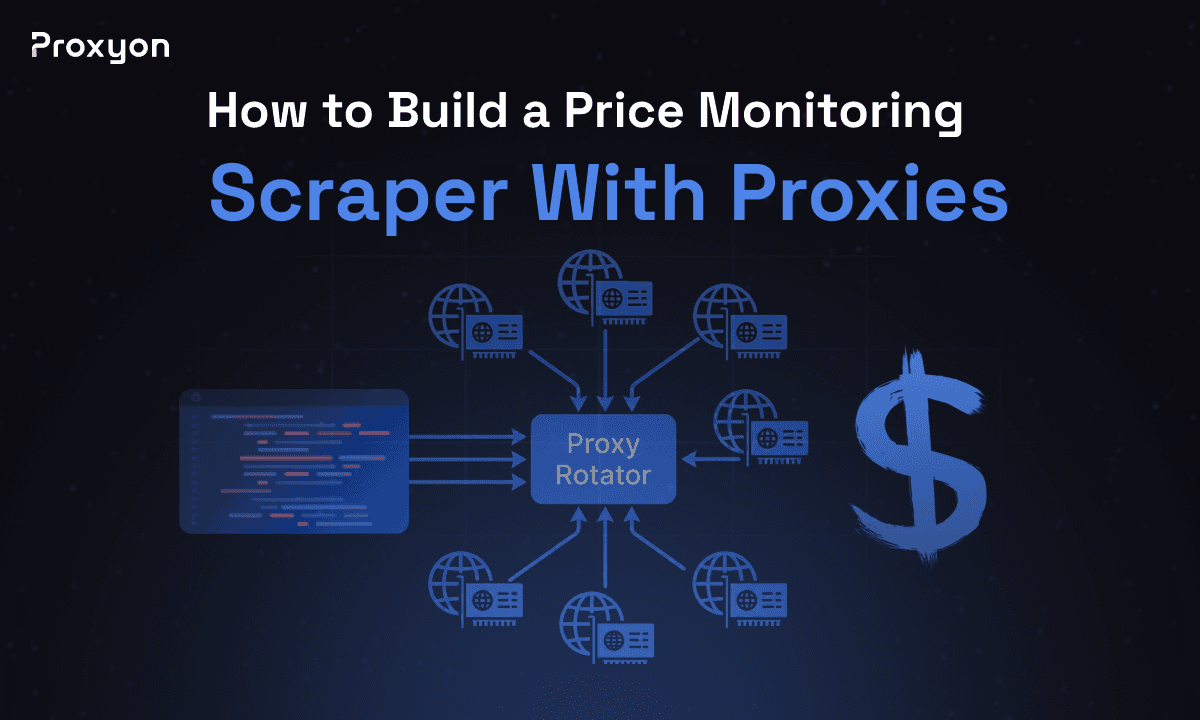

Adding Rotating Proxies to Avoid Blocks

Sending hundreds of requests from the same IP gets you blocked fast. Rotating proxies fix this by switching your IP with every request, so the target site sees your traffic spread across many sources instead of one.
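If your provider hands you a list of proxy addresses rather than a single rotating endpoint, you can sketch the rotation yourself. The example below is only an illustration of the idea: the addresses, credentials, and the `rotating_proxies` name are all hypothetical, and it cycles through the pool in order without any health checks.

```python
from itertools import cycle

# Hypothetical proxy addresses; substitute the ones your provider gives you.
PROXY_POOL = [
    "http://user:pass@proxy1.example.com:8000",
    "http://user:pass@proxy2.example.com:8000",
    "http://user:pass@proxy3.example.com:8000",
]


def rotating_proxies(pool):
    """Yield a Requests-style proxies dict for each address in turn, forever."""
    for address in cycle(pool):
        yield {"http": address, "https": address}


rotation = rotating_proxies(PROXY_POOL)
```

Each request then takes the next entry, for example `requests.get(url, proxies=next(rotation), timeout=10)`, so consecutive requests leave through different IPs.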

Residential proxies are the most reliable option for price monitoring. They use real IPs assigned by ISPs, so websites treat your requests like normal user traffic. Datacenter proxies are cheaper and work fine on sites without aggressive bot detection, but if you're scraping major retailers like Amazon or Walmart, residential proxies are worth the extra cost.

Most providers give you a single endpoint URL that handles rotation automatically. You just pass it into your request like this:

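```python
import requests

# Replace user, pass, and port with the credentials from your provider.
proxies = {
    "http": "http://user:pass@proxy.provider.com:port",
    "https": "http://user:pass@proxy.provider.com:port",
}

# url is the product page you want to monitor.
response = requests.get(url, proxies=proxies)
```

Storing and Automating Price Tracking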

A CSV file works fine for a small number of products. For anything larger, SQLite is a better call. Each time your scraper runs, it writes the product name, price, and timestamp, giving you a clean history you can query whenever you need it.
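Here's one way the SQLite side might look, using Python's built-in `sqlite3` module. The table layout, the `prices.db` filename, and the function names are assumptions made for this sketch, so adapt them to your own data.

```python
import sqlite3
from datetime import datetime, timezone


def open_db(path="prices.db"):
    """Open the database and create the prices table if it is missing."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS prices (
            product    TEXT NOT NULL,
            price      REAL NOT NULL,
            scraped_at TEXT NOT NULL
        )
        """
    )
    return conn


def record_price(conn, product, price):
    """Append one observation with a UTC timestamp."""
    scraped_at = datetime.now(timezone.utc).isoformat()
    conn.execute(
        "INSERT INTO prices (product, price, scraped_at) VALUES (?, ?, ?)",
        (product, float(price), scraped_at),
    )
    conn.commit()


def price_history(conn, product):
    """Return (price, scraped_at) rows for a product, oldest first."""
    cursor = conn.execute(
        "SELECT price, scraped_at FROM prices "
        "WHERE product = ? ORDER BY scraped_at, rowid",
        (product,),
    )
    return cursor.fetchall()
```

Every run adds a row instead of overwriting the last one, which is what gives you the history to query later.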

A cron job on Linux or macOS runs the scraper automatically. On Windows, Task Scheduler does the same job. This crontab entry runs the script at the top of every hour:

```bash
0 * * * * /usr/bin/python3 /path/to/your/scraper.py
```

You can also add email or Slack alerts that fire whenever a price drops below a certain threshold, so you're not checking the data manually either.
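A threshold alert could be sketched like this. It assumes you have a Slack incoming webhook URL, which accepts a JSON body with a `text` field; the function names and the per-product threshold dictionary are hypothetical. The standard library's `urllib` is used here so the sketch needs no extra dependency, but Requests would work just as well.

```python
import json
import urllib.request


def price_alerts(latest_prices, thresholds):
    """Return alert messages for products priced below their threshold."""
    messages = []
    for product, price in latest_prices.items():
        limit = thresholds.get(product)
        # Products without a configured threshold are skipped.
        if limit is not None and price < limit:
            messages.append(
                f"{product} is at {price:.2f} (threshold {limit:.2f})"
            )
    return messages


def send_slack_alert(webhook_url, message):
    """POST a message to a Slack incoming webhook and return the HTTP status."""
    payload = json.dumps({"text": message}).encode("utf-8")
    request = urllib.request.Request(
        webhook_url,
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

After each scrape, pass the latest prices through `price_alerts()` and send whatever messages come back to your webhook.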


Final Thoughts

A few lines of Python pull the data, rotating proxies keep your requests from getting blocked, and a simple scheduler runs the whole thing hands-free. Get the setup right once, and you'll always have accurate pricing data without lifting a finger.